David Kravets

March 05, 2026

11 min read

A year is an eternity in AI time.

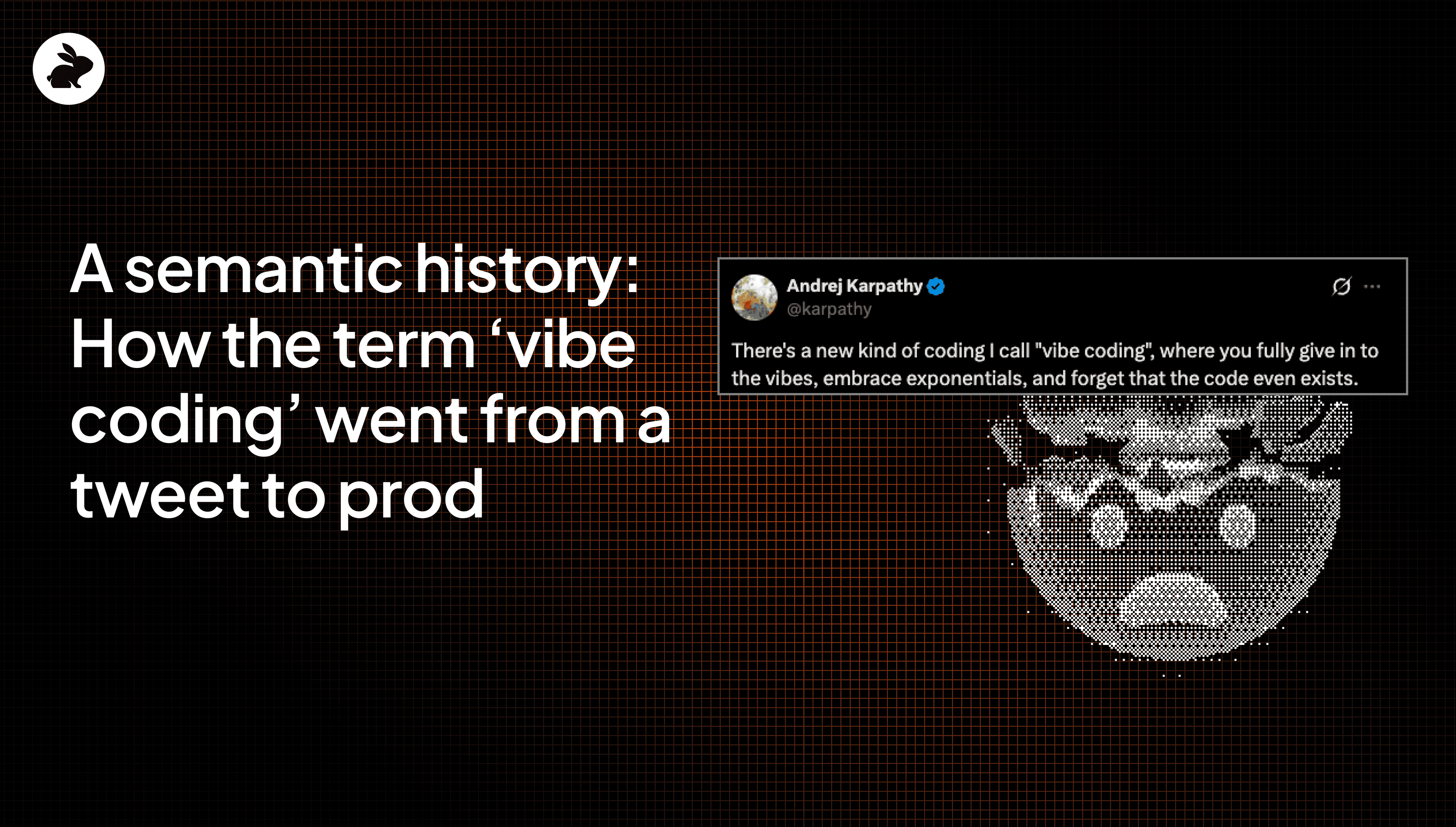

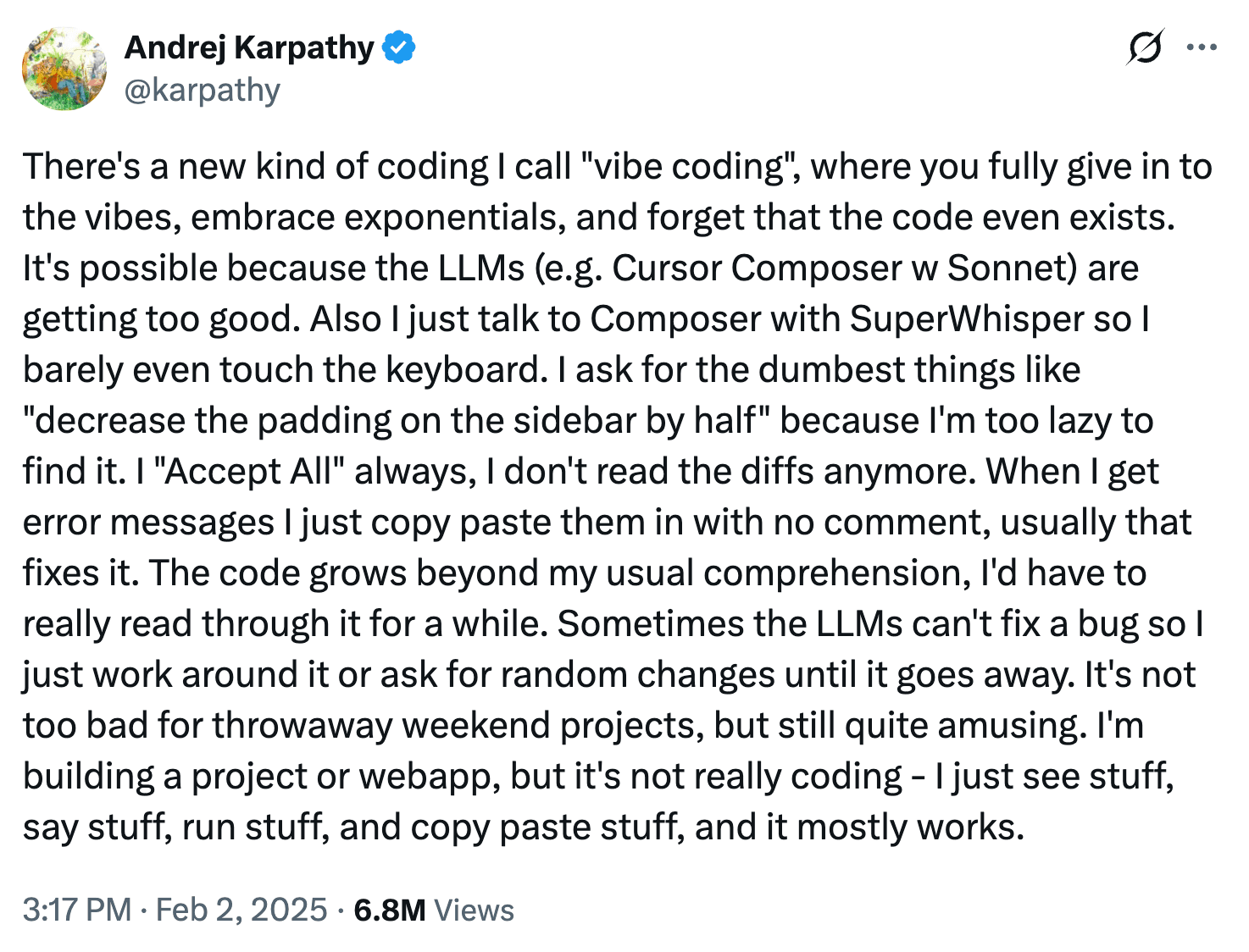

In February 2025, Andrej Karpathy dropped a tweet-sized cultural marker into the software world: “vibe coding.” The phrase stuck because it captured a visceral shift in the developer experience. Instead of grinding through syntax, you describe an intent in plain English, watch the code manifest, and nudge the output until reality matches your vision.

In this initial vision of vibe coding, Karpathy famously framed it as engineering where you “fully give in to the vibes, embrace exponentials, and forget that the code even exists.”

Karpathy’s point wasn't just that AI could help you code. We’ve had copilots for years. The point was that AI made it tempting to treat code as a steerable draft rather than something requiring line-by-line authorship.

One year later, the "vibe" has evolved.

Vibe coding doesn’t just refer to what we’re doing when coding a hobby project or slapping together a prototype anymore. We are managing an explosion of machine-generated logic destined for production systems, and we’re often calling that vibe coding, too. But is it really vibe coding? And if it’s not, what should we be calling it? And how should we be treating it in production systems?

When vibe coding was honored as a 2025 Word of the Year by Collins Dictionary, it signaled that the workflow had gone mainstream. But as the phrase broadened, so did the stakes.

Vibe coding today has become shorthand for any prompt-driven development, rather than the specific prompting experience Karpathy originally described. This drift isn’t a linguistic accident.

More than anything, it’s a signal that many engineering teams have added AI to their stacks and are struggling with the quality of its output and its downstream effects. In fact, the use of vibe coding to describe the generation of code for production-grade systems has taken on somewhat negative connotations.

The term was invoked by many precisely to emphasize that, in some cases, relying too much on AI-generated code at work was trusting an LLM’s vibes in a way better suited for a weekend project, rather than for customer-facing applications of a publicly listed company.

For example, as AI coding agents were adopted by more teams in 2025, devs on LinkedIn renamed themselves Vibe Code Cleanup Specialists in their profiles (a joke we ran with for our AWS re:Invent booth this year). What’s more, Collins’ competitor Merriam-Webster went in another direction for its word of the year. It chose slop, highlighting the large gulf that still existed between AI optimism and AI output.

Indeed, for many, the promise of AI coding agents has translated into hours spent reviewing AI slop, reworking code due to unclear prompts or intent, and dealing with incidents or bugs in production.

As we move from "throwaway weekend projects" (as Karpathy originally described his use of vibe coding) to core infrastructure, the constraints of software development have shifted. We no longer have a creation problem. We have a confidence problem. And that lack of confidence was reflected in how the word came to be used throughout 2025 for almost all uses of AI for coding, even those at work.

Some of the biggest tech companies brag about how a growing amount of their code, if not all, is written by AI. That can’t be accomplished by simply forcing devs to use AI. Many devs, if not most, appreciate the time and cognitive savings of using AI agents for certain tasks and types of code.

But that’s not the full story. For many devs, AI coding agents often actually equal more work, and that’s measurable in how the most experienced people in the room are spending their hours.

Fastly’s recent survey of 791 professional developers illustrates this shift in real time. The data shows that senior developers are far more likely to ship large amounts of AI-generated code.

Volume: Roughly one-third of senior developers report that about half their shipped code is now AI-generated (compared to just 13% of juniors).

The review tax: Nearly 30% of senior devs report that editing and auditing AI output offsets most of their initial time savings from AI.

This tells us that the most experienced engineers aren't using AI to work less. They are using it to manufacture more complexity, which they then have to manage. That’s not just because they’re coding more. It’s also because reviewing AI code is simply harder. Our own State of AI vs. Human Code Generation study, for example, found that AI-generated code had 1.7x more bugs and issues and 1.4x more critical issues.

Today, when it seems like everyone is vibe coding at work, code review is no longer a predictable ritual at the end of a sprint. Now, it is the primary mechanism that makes high-velocity engineering safe. Or, more to the point, it’s the stage where issues and bugs now slip through more frequently and that requires significantly more time and attention to get right.

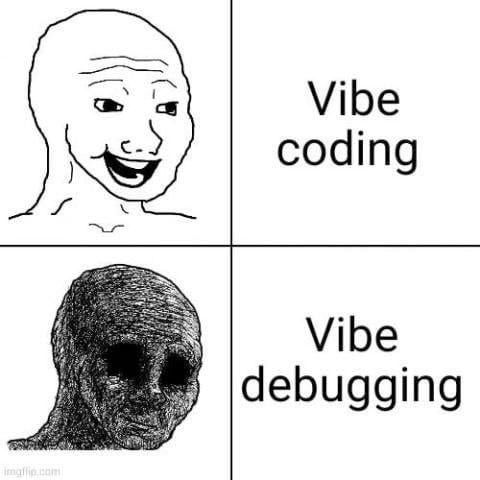

In a way, it must feel to some developers like a Faustian bargain. In return for manually writing less code, they’re having to manually review more of it. And those reviews have increasingly become stressful, load-bearing stages of the software development lifecycle.

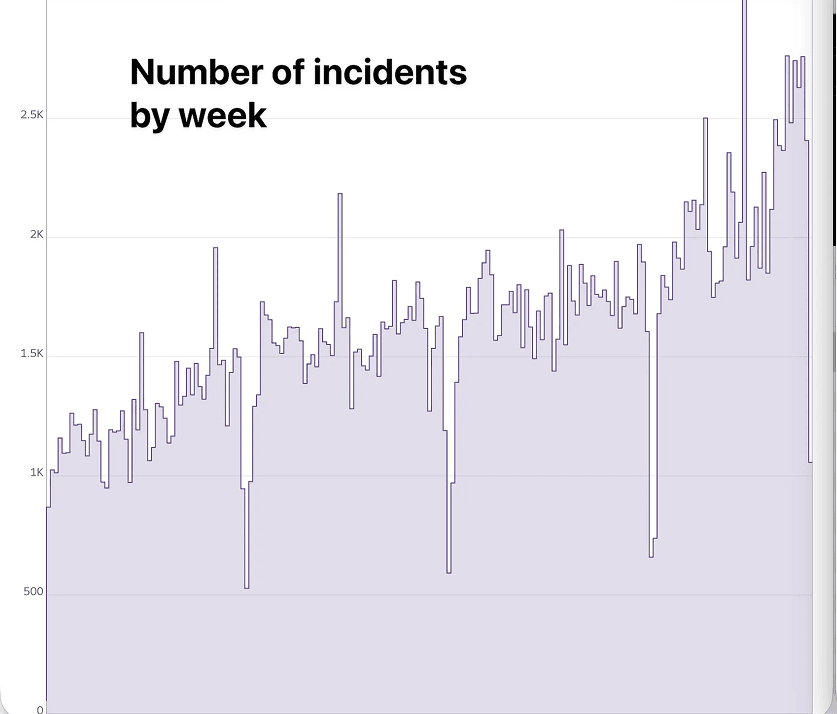

But one glance at the surge of headlines about incidents clearly shows that the normal review cycle (and likely senior devs along with it) is breaking under the added load. The difficulty of finding all the additional issues AI generates is leading to a wave of incidents that are suspected to stem from, or have been traced back to, AI-generated code.

As AI-generated code moved from prototypes into production systems, incident data began to reflect the strain. Industry outage tracking in early 2025 showed a measurable spike in global service disruptions during peak AI adoption months, before stabilizing later in the year. That analysis raised a harder question: not whether AI can generate code, but whether teams are verifying it rigorously enough before it ships.

At the same time, teams reported a growing review burden. Faster generation meant more logic to inspect, more edge cases to reason through, and more subtle regressions to catch. When verification practices don’t scale at the same rate as output, small defects are more likely to escape into production.

Recent high-profile events reinforced the point. For example, news reports detailed an AWS disruption in which an internal AI coding assistant was involved in changes that contributed to a 13-hour interruption of a cost-management service. While misconfigured access controls, not autonomous AI behavior, were later identified as the root cause, it was ultimately the AI that made the change that took down the system.

Similarly, Moonwell accidentally issued $1.8 million in bad debt in a recent incident that many are suggesting was caused by AI-powered development.

The Kimi chatbot experienced reliability issues and outages amid surging demand as the company scaled aggressively, highlighting how systems can strain when rapid AI adoption outpaces infrastructure maturity.

This is where “vibe coding” shifted in tone further. What began as a playful description of prompt-driven creation took on an even more skeptical edge in production contexts. Not because engineers reject AI leverage, but because incidents make the verification gap visible. When change accelerates without proportional review rigor, risk increases and devs become frustrated.

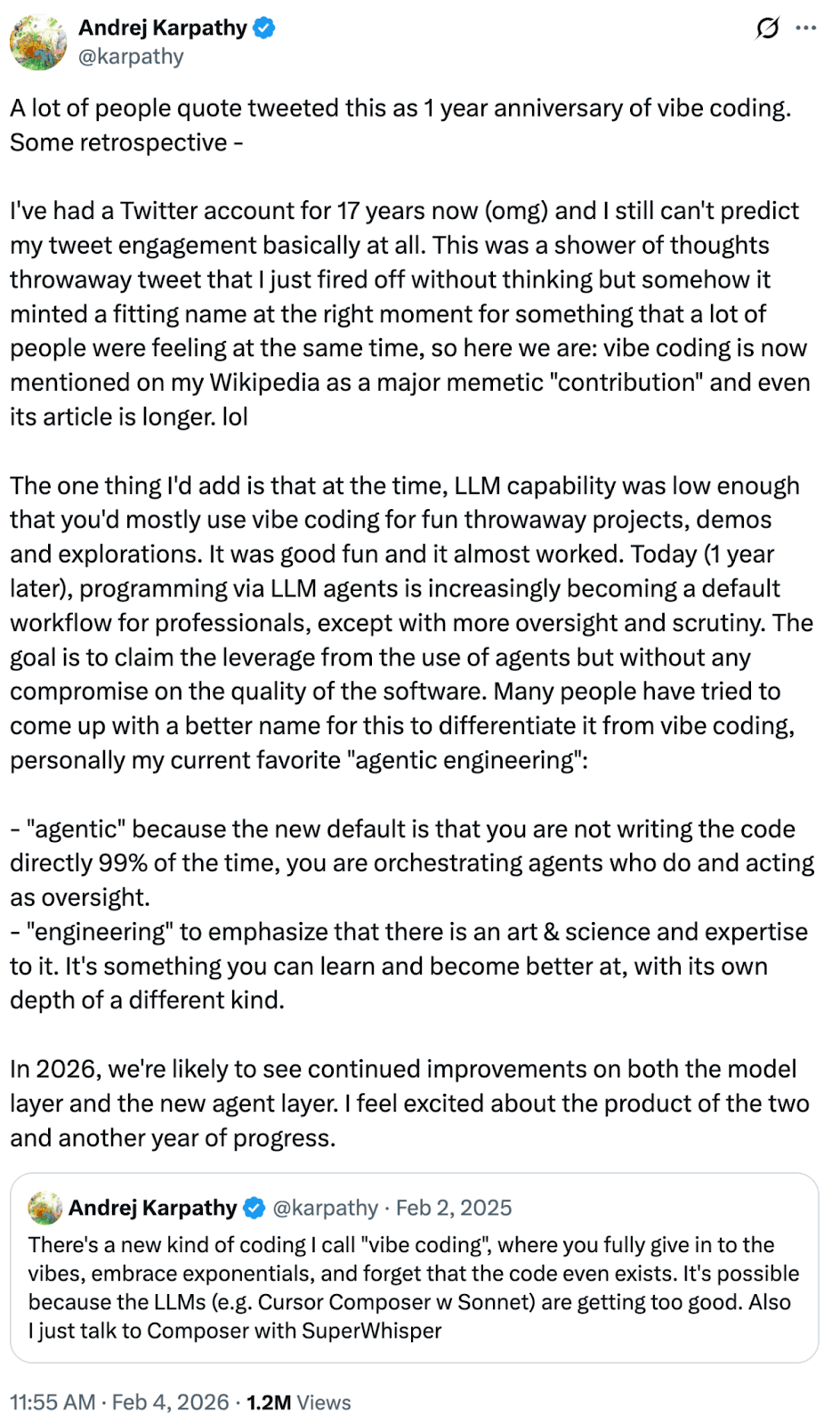

Karpathy recently refined his vibe coding thesis, noting that while the vibe started as an exploration, it has matured into what he and others describe as “agentic engineering.”

This seems like an attempt on Karpathy’s part to decouple AI-driven development from the baggage that the term vibe coding carries. But it’s also meant to mark a different kind of workflow that requires a fundamental shift in how we define technical oversight. As Karpathy recently noted on X, the industry is moving toward a model where speed and rigor are no longer mutually exclusive.

“Today (1 year later), programming via LLM agents is increasingly becoming a default workflow for professionals, except with more oversight and scrutiny. The goal is to claim the leverage from the use of agents but without any compromise on the quality of the software,” Karpathy recently wrote on X.

This new paradigm is meant as a distinction from the vibe coding era. The job is no longer to coach the model or to vibe with it but to plan for and verify its logic.

Whether “agentic engineering” will catch on as the main way to describe AI-driven development or whether the derogatory use of vibe coding in a professional context will continue remains to be seen.

Here’s the optimistic reading of the last year.

Vibe coding didn’t break engineering, even if it did increase incidents. Instead, it forced us to define what the craft actually is and imagine how it might evolve in the coming years. That’s led some to talk about how we’re on the cusp of a new golden age of software engineering, and others to proclaim that developers are dead.

Whether autonomous agents will ever replace developers is still highly contested. But what’s crystal clear is that the future of vibe coding as a word is entirely dependent on solving the quality problem AI-driven development currently faces. That’s the only way “vibe coding” can become less derogatory and have a chance of evolving into Karpathy’s preferred term for it: “agentic engineering.”

But to get there, we’ll need to adopt and perform rigorous “vibe checks” as an industry (that’s our suggestion for the 2026 word of the year, if you’re listening, Collins and Merriam-Webster).

In its original cultural context, a vibe check is a gut-check, a moment of scrutiny to see if something is as real as it claims. At CodeRabbit, we first used this term in May 2025 to describe how our AI code reviews can help ensure your code’s quality.

In 2026, it’s our belief that rigorous vibe checks must become a technical standard. The same tipping points we saw around the adoption of agentic AI in 2025 must happen in 2026 around tools that are focused on verifying and testing AI-generated code.

These vibe checks can include a number of different safeguards and systems designed to keep AI slop and issues from interfering with your production system, including things like AI code review. A vibe check is, essentially, your quality gate, something AI code makes more critical than ever before.
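To make the quality-gate idea concrete, here is a minimal sketch of what such a check could look like in code. Everything in it is an assumption made for illustration: the `ChangeReport` fields, the `vibe_check` function, and the 400-line threshold are hypothetical and do not describe how CodeRabbit or any other tool actually works.

```python
from dataclasses import dataclass


@dataclass
class ChangeReport:
    """Hypothetical summary of one proposed change (all fields are illustrative)."""
    tests_passed: bool
    critical_findings: int  # e.g. reported by static analysis or an AI code review
    lines_changed: int
    human_approvals: int


def vibe_check(report: ChangeReport, max_unreviewed_lines: int = 400) -> list[str]:
    """Return the reasons a change should be blocked; an empty list means it passes."""
    reasons = []
    if not report.tests_passed:
        reasons.append("test suite failed")
    if report.critical_findings > 0:
        reasons.append(f"{report.critical_findings} critical finding(s) unresolved")
    if report.human_approvals < 1:
        reasons.append("no human approval")
    # Assumed policy: large changes need a second pair of eyes.
    if report.lines_changed > max_unreviewed_lines and report.human_approvals < 2:
        reasons.append("large change needs a second reviewer")
    return reasons


if __name__ == "__main__":
    risky = ChangeReport(tests_passed=False, critical_findings=2,
                         lines_changed=900, human_approvals=1)
    for reason in vibe_check(risky):
        print("blocked:", reason)
```

The design choice worth noting is that the gate returns reasons rather than a bare pass or fail, so whoever is blocked can see exactly which check to address.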

We might have fallen in love with vibe coding because it named a feeling, the thrill of code appearing faster than the brain can keep up. But going forward, we need less vibing and more checking. That’s the only way vibe coding grows up.

Need a vibe check? Try CodeRabbit free today.