Sahil Mohan Bansal

March 11, 2026

5 min read

TL;DR: NVIDIA Nemotron 3 Super delivers high accuracy and faster throughput in CodeRabbit's self-hosted AI code reviews.

We are happy to share that CodeRabbit is expanding its support for the NVIDIA Nemotron family of open models, upgrading from Nemotron 3 Nano to Nemotron 3 Super for the context gathering and summarization stage of our AI code review workflow. This upgrade is available for CodeRabbit's self-hosted customers running our container image on their own infrastructure.

Nemotron 3 Super powers the context gathering and summarization stage, which runs before the frontier models from OpenAI and Anthropic perform deep reasoning and generate review comments for bug fixes. With Nemotron 3 Super, that review foundation is significantly more capable.

We tested Nemotron 3 Super as a follow-up to our initial support for Nemotron 3 Nano. At that time, we reported that a blend of open and frontier models improves the speed and cost efficiency of context gathering by routing each part of the review workflow to the appropriate model family, especially in the PR summarization phase of code reviews.

Nemotron 3 Super's larger context window and support for multi-token prediction (MTP) make it well suited to the token-hungry task of context summarization. As our code review workflows grow more agentic and complex, we have run into two constraints that Nemotron 3 Super helps address.

Context explosion: Multi-agent workflows generate significantly more tokens than standard interactions because each step requires context from tool outputs, intermediate reasoning, repo signals, and more. Over the course of a long review, this volume of context increases cost and risks goal drift.

Thinking tax: Complex agentic tasks require reasoning at every step, but routing every sub-task to a large frontier model makes the pipeline slow and expensive. The ideal solution is a mix of models in which each sub-task goes to a model matched to its difficulty, rather than escalating everything to the heaviest model available.
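The routing idea behind the "thinking tax" can be sketched in a few lines. This is a minimal illustration, not CodeRabbit's implementation: the task names, the two tiers, and the rule that unknown tasks escalate to the frontier tier are all assumptions made for the example.

```python
from enum import Enum


class Tier(Enum):
    OPEN = "open-model"          # high-volume work such as context summarization
    FRONTIER = "frontier-model"  # deep reasoning and review comments


# Hypothetical task names, chosen only for this sketch.
ROUTES = {
    "gather_context": Tier.OPEN,
    "summarize_pr": Tier.OPEN,
    "deep_review": Tier.FRONTIER,
    "generate_comments": Tier.FRONTIER,
}


def route(task: str) -> Tier:
    """Return the model tier for a task.

    Unknown tasks fall back to the frontier tier (an assumed policy:
    prefer quality over cost when the task type is not recognized).
    """
    return ROUTES.get(task, Tier.FRONTIER)
```

With a table like this, only the sub-tasks that need heavy reasoning pay for it, while summarization-style work stays on the cheaper tier.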

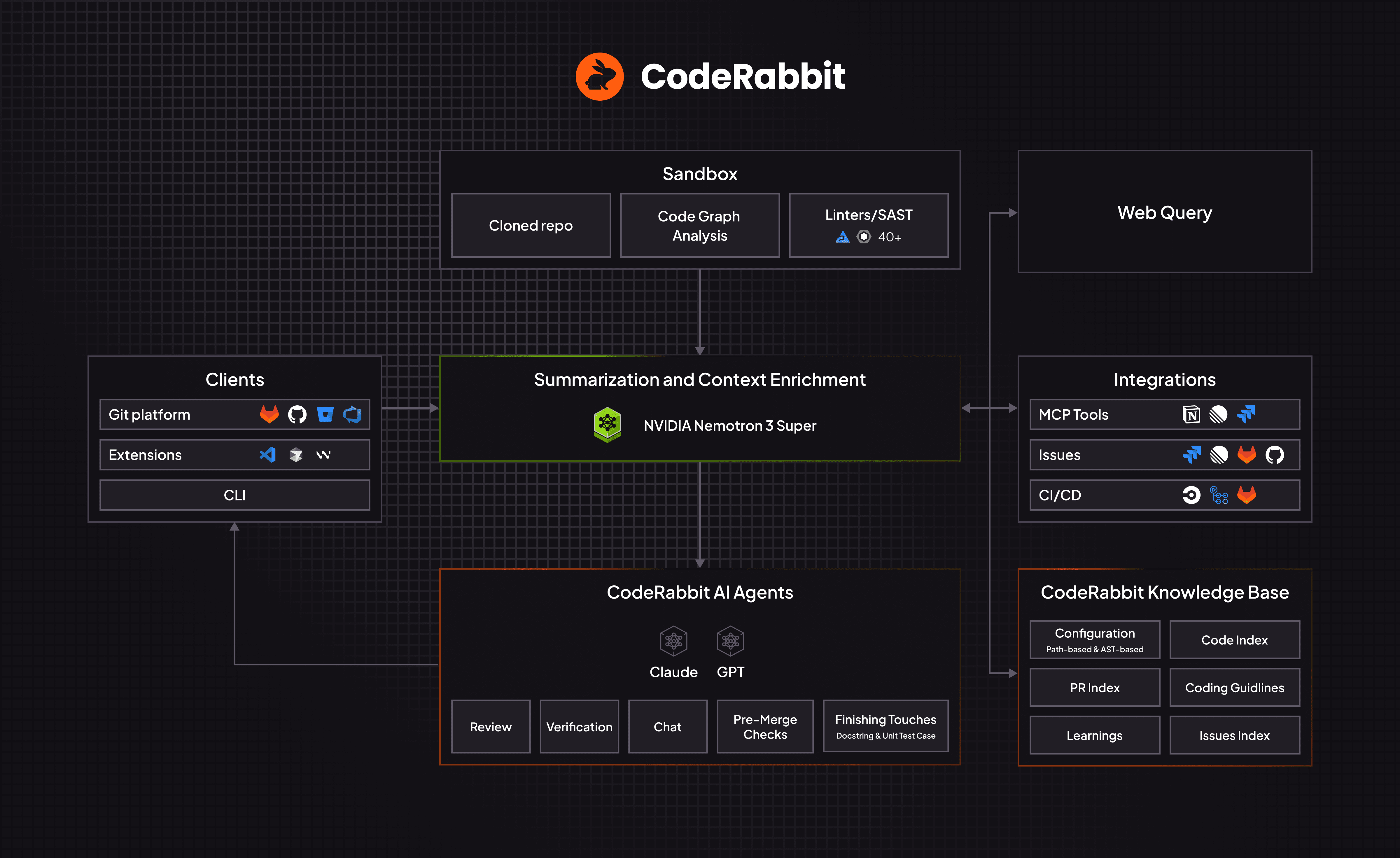

CodeRabbit architecture: using Nemotron Super for context gathering & summarization

This context-building stage is the workhorse of the overall AI code review process, and it runs several times throughout the review workflow. NVIDIA Nemotron 3 Super suits these high-efficiency tasks: its large context window (1 million tokens) and fast inference let us gather a lot of data and run several iterations of context summarization and retrieval. Repeating these iterations throughout the code review cycle improves review quality and raises the signal-to-noise ratio.
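One way to picture the repeated summarization passes is a rolling summary that folds each new batch of context into a bounded running summary. The sketch below is only an illustration under stated assumptions: `summarize` is a hypothetical placeholder that truncates text to a word budget, where a real pipeline would call a model, and the budget itself is an invented parameter.

```python
from typing import Iterable


def summarize(text: str, max_words: int) -> str:
    """Placeholder summarizer: keeps the first max_words words.

    A real pipeline would call a language model here; truncation is
    used only so the sketch runs without external services.
    """
    return " ".join(text.split()[:max_words])


def rolling_summary(chunks: Iterable[str], max_words: int = 50) -> str:
    """Fold each chunk of new context into a summary of bounded size."""
    summary = ""
    for chunk in chunks:
        # Combine the previous summary with the new chunk, then compress
        # the result back under the budget before the next iteration.
        summary = summarize(f"{summary} {chunk}".strip(), max_words)
    return summary
```

The point of the loop is that the working context stays a fixed size no matter how many iterations run, which is one way to limit the context growth described earlier.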

When you open a Pull Request (PR), CodeRabbit's code review workflow starts in an isolated, secure sandbox environment, where CodeRabbit analyzes code from a clone of the repo. In parallel, CodeRabbit pulls in context signals from several sources:

Code and PR index

Linter / Static Application Security Testing (SAST)

Code graph

Coding agent rules files

Custom review rules and Learnings

Issue details (Plan details, Jira / Linear / GitHub tickets)

Public MCP servers

Web search

A lot of this context, along with the code diff being analyzed, is used to generate a PR Summary before any review comments are generated.
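Pulling context from several sources in parallel, as described above, maps naturally onto concurrent I/O. The following is a hedged sketch using Python's `asyncio`: the source names and the `fetch_source` stub are hypothetical stand-ins for real fetchers, and nothing here reflects CodeRabbit's actual code.

```python
import asyncio

# Hypothetical source identifiers mirroring the list above.
SOURCES = [
    "code_index",
    "linters_sast",
    "code_graph",
    "rules_files",
    "learnings",
    "issues",
    "mcp_servers",
    "web_search",
]


async def fetch_source(name: str) -> tuple[str, str]:
    """Stand-in for a real fetcher; returns (source name, context text)."""
    await asyncio.sleep(0)  # placeholder for network or disk I/O
    return name, f"<context from {name}>"


async def gather_context(diff: str) -> dict[str, str]:
    """Fetch all sources concurrently and bundle them with the code diff."""
    results = await asyncio.gather(*(fetch_source(s) for s in SOURCES))
    context = dict(results)
    context["diff"] = diff
    return context


def build_context(diff: str) -> dict[str, str]:
    """Synchronous entry point for callers that are not already async."""
    return asyncio.run(gather_context(diff))
```

The combined dictionary is the kind of input a summarization step could consume to produce the PR Summary before any review comments are written.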

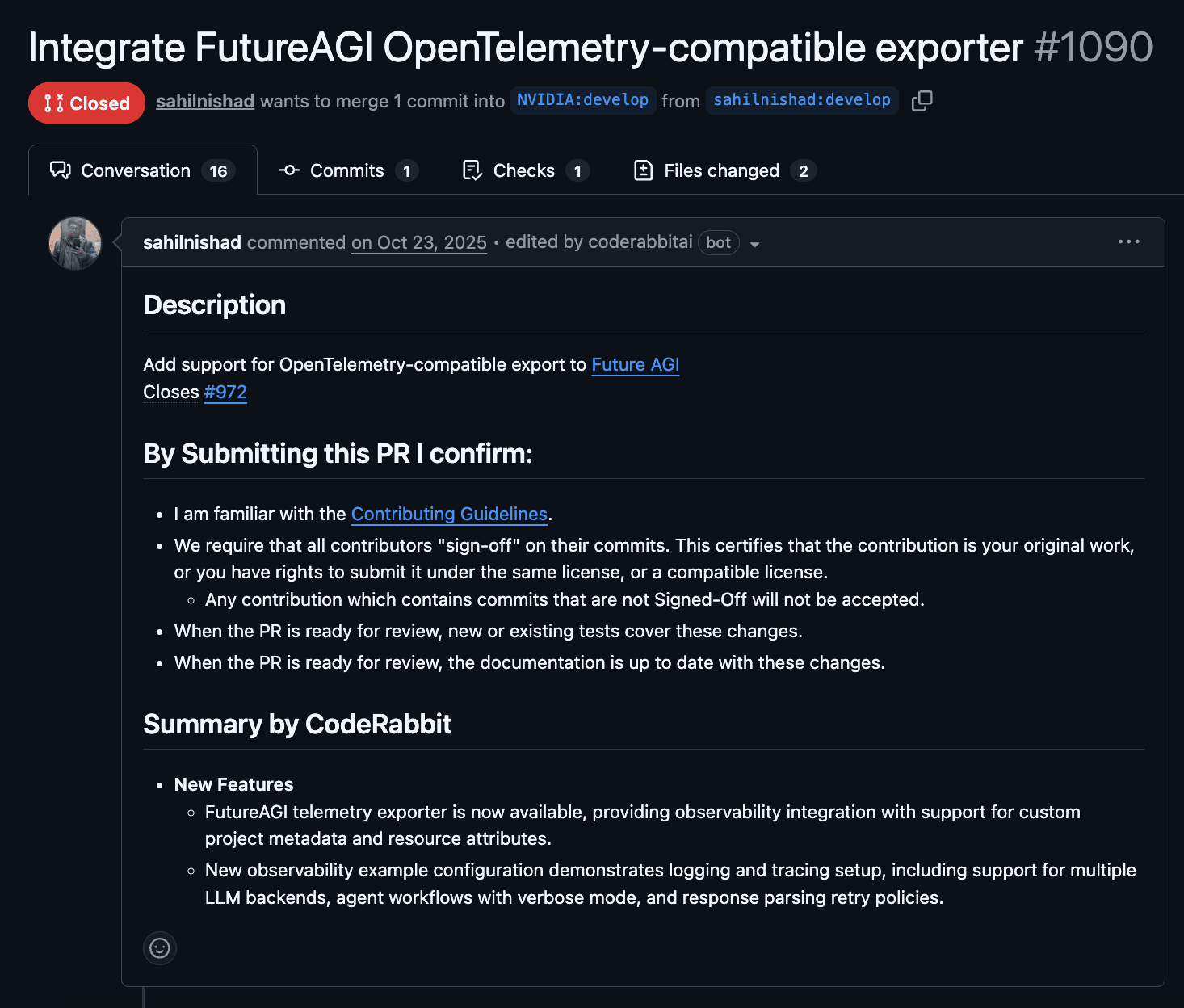

PR Summary generated by CodeRabbit, powered by Nemotron 3 Super

Summarization is at the heart of every code review and is the key to delivering high signal-to-noise in the review comments. Nemotron 3 Super is a 120-billion-parameter open model with 12 billion active parameters at inference. Its hybrid Mixture-of-Experts (MoE) architecture combines transformer layers, which handle the reasoning, with Mamba layers, which handle the high-volume, repetitive work of context processing during review summarization. That combination is critical for our code reviews.

Predicting multiple tokens simultaneously also results in meaningfully faster inference, which speeds up review summarization. All other code review tasks flow downstream from summarization, so the faster the summarization, the faster the overall code review. Nemotron 3 Super delivers much faster performance than Nemotron 3 Nano.

Nemotron 3 Super can also hold a large codebase context, including context from external sources (Jira tickets, logs, project requirement docs, etc.), without losing state across long tasks.

CodeRabbit now supports Nemotron 3 Super (initially for its self-hosted customers) for the context summarization part of the review workflow, while the frontier models from OpenAI and Anthropic focus on finding hidden bugs. For customers, this means faster PR summarization and faster code reviews without compromising quality.

We are also delighted to support the announcement from NVIDIA today about the expansion of its Nemotron family of open models and are excited to work with the company to help accelerate AI coding adoption across every industry.

Get in touch with our team to access CodeRabbit’s container image if you would like to run AI code reviews on your self-hosted infrastructure.