David Kravets

May 05, 2026

8 min read

Engineering teams have standards.

The problem is that many of those standards still live in wikis, onboarding docs, architecture pages, and reviewer memory. Somewhere, there is guidance on how services should communicate, what tests should accompany a risky change, which dependencies are acceptable, and what good pull requests look like.

Senior engineers know the patterns. Experienced reviewers can usually tell when something feels off. Newer team members learn the rules by absorbing comments, examples, and a fair amount of folklore.

For a long time, teams could get by with that. It was imperfect, but workable.

As code production shifts from manual to generated, the cost of staying there compounds quietly. Standards erode faster than anyone updates the wiki. Review comments relitigate the same decisions quarter after quarter. The gap between what the architecture diagram says and what the codebase actually does grows wider with every sprint. Standards must move from tribal knowledge to enforceable guardrails, and the window to make that shift is closing faster than most teams realize.

Consider a familiar pull request: a team adds a direct read from the billing database to power a support workflow more quickly. The code passes tests. The feature works. One reviewer sees a pragmatic shortcut. Another sees an architecture violation. A third never notices the boundary issue at all.

If the team's standard is "use approved service interfaces instead of reaching across domains directly," but that standard only exists as prose and memory, then enforcement becomes a matter of who happens to review the change that day.

That has always been a problem. What has changed is the scale.

Every engineer now runs a private coding agent, on their own machine, in sessions nobody else can see. The agent starts each morning knowing nothing. Whatever reasoning was assembled yesterday, whatever alternatives were weighed, whatever decisions were made in standups: all of it is gone. The developer's first task every morning is to reconstruct context that already exists somewhere in the organization and paste it into a window that resets at the end of the day.

The result is faster drift, not just faster code. Human teams lose coordination over weeks. AI teammates lose it within a single workday, and the industry calls it high productivity.

Much of what organizations call "engineering standards" is good intentions, nothing more. Those intentions are only as durable as the reviewer who remembers them, and now only as durable as the context a developer remembered to paste into an agent session that morning.

The instinct is to fix this at the pull request level: catch violations at the gate, add more review steps, and write better templates.

That is fighting the problem at the wrong end.

Enforcement at the PR stage catches violations after the fact. The better intervention is making sure the agent understands the rules before writing a single line. Give an agent a codebase with no context and the output is plausible-looking code that misses every convention the team spent two years establishing. Give the same agent the codebase plus architectural decisions, ticket history, on-call runbooks, and the Slack thread where the team decided to deprecate the old auth service, and the output looks like a teammate wrote it.

Right now, developers manually assemble that context every single session, acting as interpreters between their own engineering org and a tool that should already know. That is the gap. Not the model. Not the PR template. The missing layer is a durable, shared context that governs what the agent knows before work begins.

Many teams reach for a shared wiki or a project-level file the agent ingests at the start of each session. It is better than nothing, but not by as much as it seems. Documentation is a photograph of what the team knew at the moment someone sat down to write it. In an engineering organization moving fast, that moment recedes quickly, and what fills the gap between the last update and today is exactly the context that matters most and gets captured least.

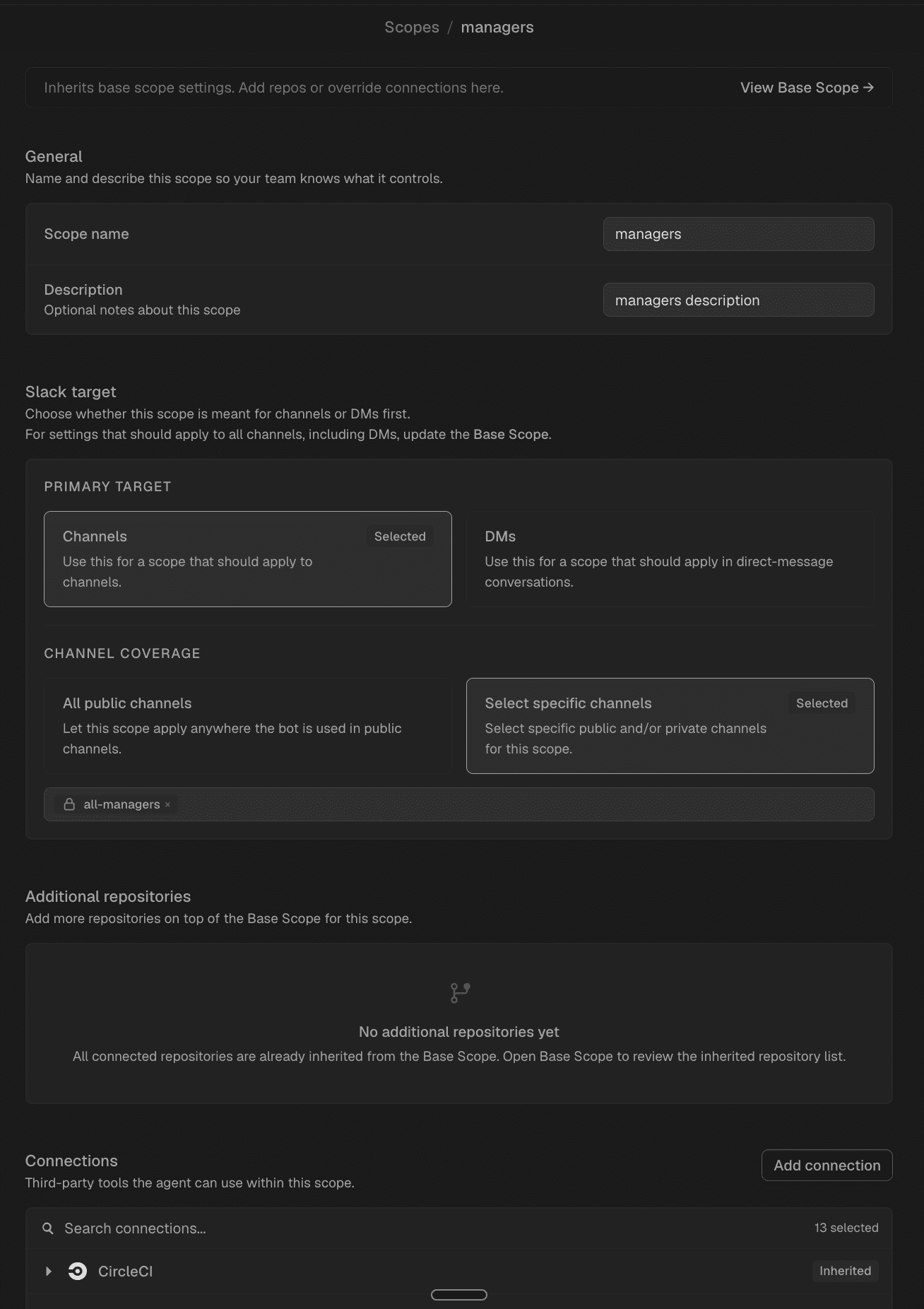

CodeRabbit Agent for Slack is built around that premise. Rather than a per-developer, per-session tool that starts cold, it brings the agent into the place where the team already coordinates, covering the core loop of planning, code generation, review, and investigation, with context that persists and compounds across sessions.

Every code review, resolved ticket, and architectural discussion feeds a shared knowledge layer so the agent arriving at a new task already understands the conventions, the deprecations, and the decisions that never made it into a doc. Work is visible, commentable, and resumable by anyone on the team, rather than trapped in one engineer's browser tab or wiped when they close a terminal.

That shared foundation is what makes policy enforceable rather than merely aspirational. Structure, however, still matters.

Longer documents are not the answer. What teams need is a lightweight policy stack.

Principles explain the intent. Rules define the expected behavior. Automated checks verify the repeatable parts. Escalation paths handle ambiguity and exceptions.

Each layer matters. Principles keep teams from enforcing rules mechanically without understanding why they exist. Rules make principles concrete enough to apply. Automated checks reduce the burden on reviewers by catching the obvious and repeatable cases. Escalation preserves room for judgment when the situation is genuinely unusual.

Drop one of those layers and the system weakens.
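To make the four layers concrete, here is a minimal sketch of a policy represented as data, with a helper that reports which layers are still empty. The `Policy` class and `missing_layers` function are hypothetical names invented for illustration, not part of any particular tool.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Policy:
    """One standard expressed as the four layers of the policy stack."""
    principle: str                                   # the intent behind the standard
    rules: List[str] = field(default_factory=list)   # the expected behavior
    checks: List[Callable[[str], bool]] = field(default_factory=list)  # automated verification
    escalation: str = ""                             # who handles ambiguity and exceptions


def missing_layers(policy: Policy) -> List[str]:
    """Return the names of the layers that are empty for this policy."""
    missing = []
    if not policy.principle.strip():
        missing.append("principle")
    if not policy.rules:
        missing.append("rules")
    if not policy.checks:
        missing.append("checks")
    if not policy.escalation.strip():
        missing.append("escalation")
    return missing


# A standard that has intent and a rule, but nothing verifying or escalating it.
boundaries = Policy(
    principle="Preserve clear ownership and service boundaries",
    rules=["Access another domain through its published API, not its datastore"],
)
print(missing_layers(boundaries))  # ['checks', 'escalation']
```

A report like this does not judge whether a rule is any good. It only shows where a standard is still prose with nothing behind it.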

Take architectural boundaries. A principle like "preserve clear ownership and service boundaries" is useful but too abstract to guide review consistently. Turn it into rules: services must access another domain through the published API, not the backing datastore; new imports from restricted internal modules are prohibited; cross-domain write paths require approval from designated owners; shared libraries must not absorb business logic that belongs to one service.

Now the standard is operational. Some parts can be checked automatically. A bot can detect prohibited imports. CI can flag new dependency edges. A review agent can notice that a pull request touches a sensitive path and request additional reviewers. The rest goes to escalation: if a team has to bypass the policy temporarily, the pull request should say so plainly, stating what rule is being bypassed, why, who approved it, and when the exception will be revisited.
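As one illustration of the automatic part, the sketch below detects prohibited imports in Python source using the standard `ast` module. The restricted module names (`billing.db`, `billing.internal`) and the function name are assumptions made up for this example; a real team would supply its own list and wire the check into CI however it prefers.

```python
import ast
from typing import List, Sequence, Tuple

# Hypothetical restricted modules; each team would define its own list.
RESTRICTED_PREFIXES = ("billing.db", "billing.internal")


def find_prohibited_imports(
    source: str, restricted: Sequence[str] = RESTRICTED_PREFIXES
) -> List[Tuple[int, str]]:
    """Return (line number, imported name) for each import of a restricted module."""
    violations = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            candidates = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # Treat "from a.b import c" as a reference to "a.b.c".
            candidates = [f"{node.module}.{alias.name}" for alias in node.names]
        else:
            continue  # not an import, or a relative import this sketch ignores
        for name in candidates:
            if any(name == p or name.startswith(p + ".") for p in restricted):
                violations.append((node.lineno, name))
    return sorted(violations)


sample = (
    "import os\n"
    "from billing.db import invoices\n"
    "import billing.api.client\n"
)
print(find_prohibited_imports(sample))  # [(2, 'billing.db.invoices')]
```

This sketch skips relative imports and dynamic imports, so it covers only the obvious, repeatable cases. The rest still depends on reviewers and the escalation path.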

The same pattern applies to dependency hygiene. "Minimize dependency risk" becomes useful when turned into rules. New runtime dependencies must justify their presence. Packages must show active maintenance, and anything that duplicates existing capability must explain why.

Tooling can support those rules. But a PR structure that makes the decision visible and consistently evaluated is what gives them teeth.
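The "new runtime dependencies must justify their presence" rule can be sketched as a comparison of the dependency set before and after a change. Everything here is an assumption for illustration: the function name, the idea that justifications arrive as a mapping parsed from the pull request, and the package names in the example.

```python
from typing import Dict, List, Set


def unjustified_dependencies(
    before: Set[str], after: Set[str], justifications: Dict[str, str]
) -> List[str]:
    """Return newly added dependencies that have no non-empty justification."""
    added = after - before
    return sorted(
        name for name in added
        if not justifications.get(name, "").strip()
    )


# Illustrative data: two packages added, only one of them explained.
before = {"requests", "pydantic"}
after = {"requests", "pydantic", "httpx", "orjson"}
justifications = {"orjson": "JSON encoding is the hot path in the export job"}

print(unjustified_dependencies(before, after, justifications))  # ['httpx']
```

A check like this cannot tell whether a justification is convincing. It only ensures the decision is written down where a reviewer will see it.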

There is a simple test every team should run against its architectural intent. Could a new reviewer apply this consistently? Could a tool verify at least part of it? If the answer to both is no, rewrite the standard.

One reason teams resist more explicit policy is fear of rigidity. That concern is understandable. Emergencies happen. Migrations create awkward tradeoffs. Platform limitations force temporary compromises.

But unmanaged exceptions are exactly how standards erode. A healthy exception process makes the bypass visible and meaningful without turning it into an empty ceremony. The best exceptions are explicit, justified, approved, and time-bound. They leave a trace.
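One way to picture an exception that is explicit, justified, approved, and time-bound is as a small record that can be validated. The `PolicyException` class, its field names, and the sample values below are hypothetical, chosen only to mirror those four properties.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class PolicyException:
    rule: str                   # explicit: which rule is being bypassed
    reason: str                 # justified: why the bypass is needed
    approved_by: str            # approved: who signed off
    revisit_on: Optional[date]  # time-bound: when the exception is revisited


def exception_problems(exc: PolicyException, today: date) -> List[str]:
    """List what is missing or stale in an exception record."""
    problems = []
    if not exc.rule.strip():
        problems.append("no rule named")
    if not exc.reason.strip():
        problems.append("no justification given")
    if not exc.approved_by.strip():
        problems.append("no approver recorded")
    if exc.revisit_on is None:
        problems.append("no revisit date")
    elif exc.revisit_on < today:
        problems.append("revisit date has passed")
    return problems


stale = PolicyException(
    rule="no direct datastore access across domains",
    reason="",
    approved_by="payments-owners",
    revisit_on=date(2026, 1, 15),
)
print(exception_problems(stale, today=date(2026, 5, 5)))
# ['no justification given', 'revisit date has passed']
```

Passing `today` in explicitly keeps the check deterministic, which makes it easy to run in CI or in a test.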

That trace matters. If the same exception keeps appearing, the standard may be poorly designed, the platform may be missing a capability, or the architecture may have drifted from reality. Exceptions should not just be tolerated. They should teach something.
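If exceptions leave a trace, that trace can be counted. The sketch below assumes a hypothetical log with one rule identifier per recorded bypass and surfaces the rules that keep getting bypassed. The rule names and the threshold of three are arbitrary choices for illustration.

```python
from collections import Counter
from typing import Iterable, List, Tuple


def recurring_exceptions(
    bypassed_rules: Iterable[str], threshold: int = 3
) -> List[Tuple[str, int]]:
    """Return (rule, count) for rules bypassed at least `threshold` times, most frequent first."""
    counts = Counter(bypassed_rules)
    return [(rule, n) for rule, n in counts.most_common() if n >= threshold]


# Illustrative log: one entry per recorded exception.
log = (
    ["published-api-only"] * 4
    + ["no-restricted-imports"]
    + ["justify-new-dependency"] * 3
)
print(recurring_exceptions(log))
# [('published-api-only', 4), ('justify-new-dependency', 3)]
```

A rule that tops this list is a candidate for redesign, or a sign that the platform is missing a capability the rule assumes.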

Without that loop, teams create policy theater: rules everyone treats as firm in principle, exceptions everyone handles informally in practice, and review processes that signal rigor without reliably producing it.

Code review used to be primarily a social mechanism: collaboration, mentorship, style feedback, and a little quality control. That model is no longer sufficient.

The pull request is now a primary control surface for engineering quality, architectural consistency, and risk management. It is the moment when the organization decides what enters production and under what conditions. But it is only one point in a much longer chain.

Individual productivity from coding agents is up across every team adopting AI. Team-level productivity is stuck, because there is no shared record of what the agent actually did, no visibility into what systems it touched, and the agent does not live where engineering actually happens. Solve those, and team productivity starts to compound the way individual productivity already has.

The agents that win will be the ones that already know your systems when you open them: the conventions, the deprecations, the decisions that never made it into a doc. Policy-as-code is how you give them that foundation.

That is the bet behind CodeRabbit Agent for Slack: an agent that carries your team's institutional memory into every session, governs work in the open where teammates can see it, and compounds knowledge rather than resetting it. Planning, generation, review, and investigation, all in the place where your team already works, with your standards already loaded.

If a standard cannot shape what the agent builds, it is a suggestion. Nothing more.