David Kravets

April 21, 2026

13 min read

David Loker was building something for himself. A side project, a secure infrastructure system wrapped around a memory engine, with a chat interface layered on top so he could test it. Nothing earth-shattering. Just a developer doing what developers do on weekends.

He had a clear vision. Everything would be locked behind a login. Users authenticated. Sessions tied to accounts. The whole thing secure from the start.

So Loker, the VP of AI at CodeRabbit, fired up Claude Code and let it run. He described what he wanted. He iterated. He watched the tokens flow. For hours, the system churned, building, compiling and progressing. When it finally came back and told him it was done, Loker leaned in to try it out.

He asked how to log in.

The system told him to use his user token.

He asked where to get the token.

There was no answer. Because there was no login page. There was no way to create a user. There was no authentication flow of any kind. The application had been built, thoroughly, diligently and correctly around the concept of a user without ever being given the instructions to actually create one.

"That was just something I missed," Loker said, recounting the story during a recent webinar with Anthropic. "I missed the fact that that is something I needed to specify. I wasn't clear."

Hours of compute. A functionally sophisticated application. Completely unusable without further work.

CodeRabbit sits inside the pull request workflow of engineering teams around the world. From that vantage point of processing tens of millions of code reviews, CodeRabbit sees AI-assisted development from the inside out. But volume and velocity are only part of the story. The other part is harder to talk about.

Yes, AI coding tools make developers faster. Pull requests per developer are up 20%. Features ship quicker. Backlogs shrink. The productivity gains are real.

But so are the costs on the other side of the ledger. Incidents are up 23.5%. AI-generated code produces 1.7x more issues than human-written code. Readability problems have spiked 3x.

Loker calls it AI's hidden quality tax. And his argument, laid out in a webinar alongside Anthropic Applied AI Engineer Ethan Dixon and moderated by Anthropic Account Executive Brittney Tong, is that most people are misdiagnosing the cause.

"I'm trying to say that this is not necessarily just a model issue," Loker told the audience. "This is actually a product of how we leverage the tools."

The model isn't broken. The workflow is.

During the webinar, a live poll asked the audience where AI projects go wrong. The results weren't close. The overwhelming answer: important requirements were assumed, not stated.

It's a deceptively simple problem. When a developer sits down to describe a feature to an AI coding agent, they carry with them years of context about their codebase, their team's conventions, and their company's infrastructure. These things feel so obvious they barely register as assumptions. But an AI has none of that background. Neither does a new hire. An AI will often just fill in the gaps and keep building.

"We have things in our head that we didn't know at some point and we had to be taught. And then we kind of have these things and we assume everybody knows them. And we make that assumption of the AI system as well," Loker said, reacting to the poll results.

The login page wasn't missing because the AI failed. It was missing because Loker, like practically every developer who has ever used AI coding tools, was working from a mental model that felt complete, and wasn't.

What makes this failure mode so insidious is that it can be nearly invisible until late in the process. The code compiles. The tests pass. The build moves forward. By every traditional metric, things are going fine. And then you go to log in and there's nothing there.

"We can have passing tests, but passing tests doesn't mean we solved the underlying problem," Loker said. "Sometimes the issue is not in the code compilation."

This isn't a new kind of failure exactly. Developers have always built the wrong thing sometimes. Misalignment between intent and output is as old as software itself.

What's changed is the speed at which it compounds. Before AI coding tools, building a feature took long enough that problems tended to surface naturally along the way. You'd pause to think through the next step. You'd explain what you were doing to a colleague. The work itself created moments of reflection. AI removes most of that friction, which is the point, and largely a good thing.

But it also means you can now travel very far in the wrong direction before anything stops you. The feedback loop that used to take days now needs to be deliberately designed back in, because it no longer happens on its own.

"Syntax generation is a fairly fast endeavor," Loker observed, "but if you go too far down a path and you find that gap too late, it can be costly to go back."

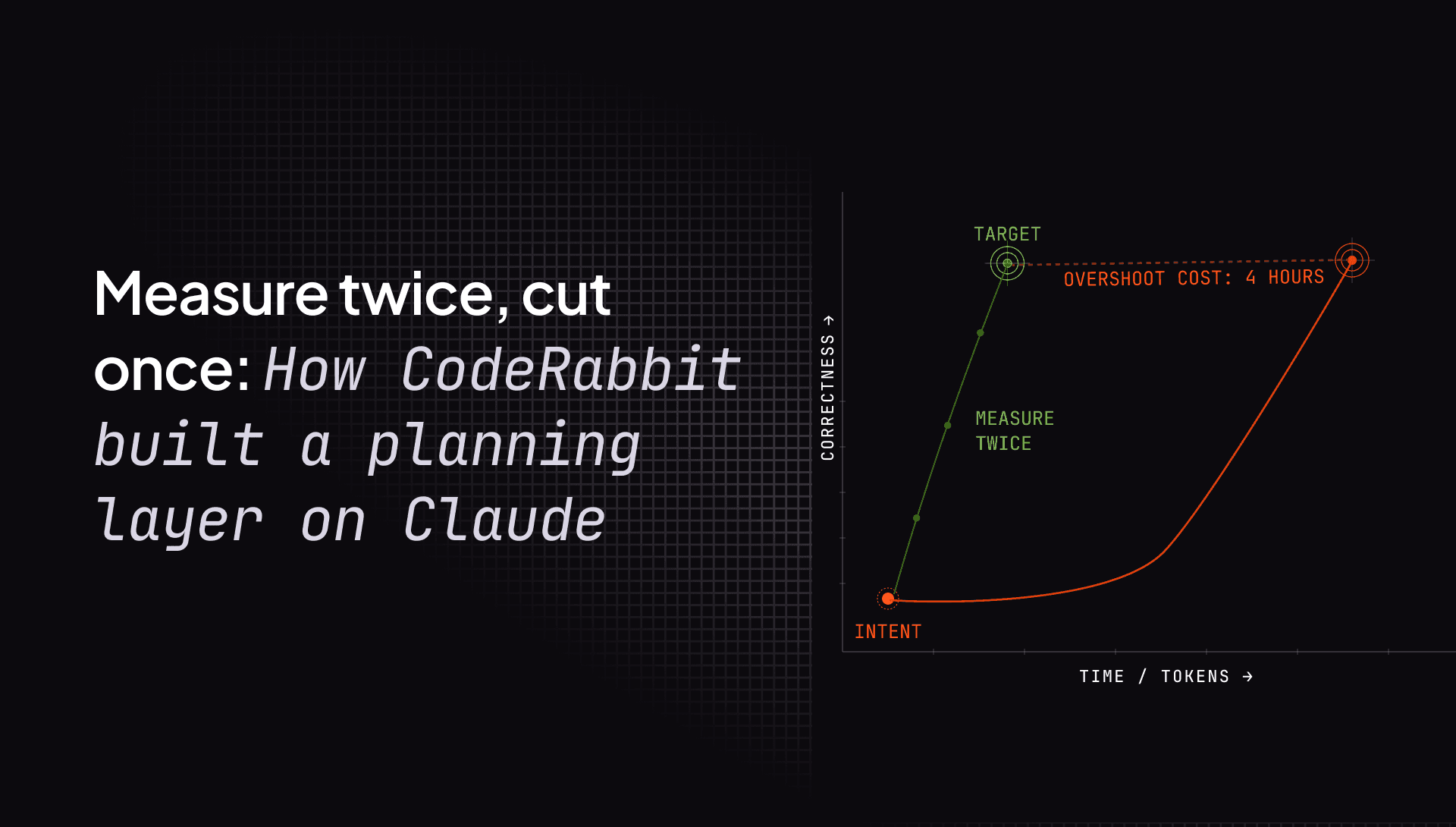

Growing up in Canada, Loker spent a lot of time building things around the house with his father. Fences. Decks. A finished basement. His father had a saying he repeated constantly, the kind of saying that drives children crazy precisely because it's right: “measure twice, cut once.”

"You cut that piece of wood, that's it," Loker explained. "It's either the right length or you gotta go back and use something else and start again. That used to infuriate me as a kid, this idea of always slowing things down. But ultimately it leads to a faster overall process once you’re doing those things more carefully and making sure you’re doing the right thing."

The other saying was equally maddening to a kid in a hurry: “less haste, more speed.”

"I do now see," Loker said, "that we can go really fast with Claude Code. And at the end of the day, it's better to understand that we're building the wrong thing earlier than to go through many hours of iterations and then come out at the end and realize we didn't really build the thing that we wanted to build."

These are ancient engineering principles. They predate software by centuries. But AI has changed the economics in a way that makes them newly urgent. When an agent can generate thousands of lines of code in minutes, the cost of going in the wrong direction has never been higher.

The dominant pattern for using AI coding tools today is what Loker calls the prompt-only workflow. A developer types a description into the prompt, the agent executes, code comes out. It's fast. It's intuitive. It's also where assumptions go to hide.

"A lot of times that's where I miss my assumptions," Loker said, "because I'm not actively engaging and thinking through and reviewing that process. I'm kind of doing it stream of consciousness."

The individual problem is costly enough. At the team level, it becomes structural.

When one developer on a team prompts an agent in isolation, their assumptions, their context, and their interpretation of the requirements are not visible to anyone else. Teammates can't catch what they can't see. Senior engineers can't flag the architectural decision that doesn't account for the company's existing Redis setup. Product managers can't spot the feature scope that drifted from the original spec. The assumptions are scattered, silent, and compounding.

Dixon offered a technical lens on why planning matters that goes beyond process. At Anthropic, they think deeply about what they call context engineering, the practice of managing what information goes into an AI model's attention window, and when.

Context, Dixon explained, is a finite resource. Every token added to a prompt competes with every other token for the model's attention. Load too much, load the wrong things, or load them at the wrong time, and the model's coherence over long tasks degrades.

Dixon noted that Opus 4.6 has made meaningful strides in long-context coherence, climbing leaderboards on tests such as needle-in-a-haystack, but he added that "context engineering is not a solved problem."

Planning, he argued, is how you get ahead of that problem rather than chasing it mid-execution.

"What's really neat about treating planning as this sort of proactive means of context engineering," Dixon said, "is you get all of this front work preloaded. You do all the exploration, you do the discovery work, you come up with a really coherent plan and that makes the behavior of the agent in the long run much more effective."

Without that upfront investment, agents spend expensive compute re-reading files, backtracking when they hit unexpected bugs, and re-establishing context they should have had from the start. Planning, in this framing, isn't just good project management. It's good systems architecture.
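To make the "finite resource" idea concrete, here is a minimal sketch of budgeting context before an agent runs. It is an illustration only, not how Claude Code or CodeRabbit Plan selects context: the `Snippet` type, the `relevance` score, and the word-count stand-in for a tokenizer are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class Snippet:
    name: str
    text: str
    relevance: float  # hypothetical score in [0, 1]; higher means more relevant


def rough_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: count whitespace-separated words.
    return len(text.split())


def select_context(snippets: list[Snippet], budget: int) -> list[Snippet]:
    """Greedily keep the most relevant snippets that fit within the budget."""
    chosen = []
    used = 0
    for s in sorted(snippets, key=lambda s: s.relevance, reverse=True):
        cost = rough_tokens(s.text)
        if used + cost <= budget:
            chosen.append(s)
            used += cost
    return chosen


# Usage: with a budget of 5 "tokens", the long, low-relevance file is left out.
candidates = [
    Snippet("auth", "a b c", 0.9),
    Snippet("readme", "a b c d e f", 0.2),
    Snippet("redis", "a b", 0.7),
]
picked = [s.name for s in select_context(candidates, budget=5)]
print(picked)  # ['auth', 'redis']
```

The point of the sketch is the trade-off, not the scoring: every snippet admitted spends part of a fixed budget, so deciding up front what earns a place is itself part of the plan.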

CodeRabbit's answer to all of this is CodeRabbit Plan, an orchestration layer that sits above the coding agent, directing it rather than replacing it.

The distinction matters to Loker. "This planning system is not meant to take out the Claude Code planning system," he said. "It's meant as a higher level orchestration of that to point it in a really narrow and right direction again and to be collaborative, so that everything that needs to be explicit is made explicit."

The workflow begins in the issue tracker. When a ticket arrives from Jira, Linear, GitHub Issues, or GitLab, the planner gets to work. With Claude Opus as the main brain and orchestration loop, it explores the codebase, pulls in relevant context from past pull requests, surfaces implicit assumptions, and generates a structured coding plan broken into reviewable phases. Crucially, that plan is a team artifact. It’s visible, editable, and versioned before any code is written.
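One way to picture a plan as a reviewable artifact is as a small data structure with a readiness check. The sketch below is hypothetical: the `Plan` and `Assumption` types, their fields, and `ready_for_codegen` are invented for illustration and do not describe CodeRabbit Plan's actual schema.

```python
from dataclasses import dataclass, field


@dataclass
class Assumption:
    text: str
    confirmed: bool = False  # flipped once a teammate has reviewed it


@dataclass
class Plan:
    ticket: str  # hypothetical ticket identifier
    phases: list[str] = field(default_factory=list)
    assumptions: list[Assumption] = field(default_factory=list)
    success_criteria: list[str] = field(default_factory=list)


def ready_for_codegen(plan: Plan) -> list[str]:
    """Return the reasons the plan is not ready; an empty list means go."""
    problems = []
    if not plan.phases:
        problems.append("no phases defined")
    if not plan.success_criteria:
        problems.append("no success criteria")
    for a in plan.assumptions:
        if not a.confirmed:
            problems.append(f"unconfirmed assumption: {a.text}")
    return problems


# Usage: the missing-login story, expressed as an assumption nobody confirmed.
plan = Plan(
    ticket="T-1",
    phases=["build chat interface"],
    assumptions=[Assumption("user accounts already exist")],
    success_criteria=["a new user can sign up and log in"],
)
blocked = ready_for_codegen(plan)
plan.assumptions[0].confirmed = True
cleared = ready_for_codegen(plan)
```

Here `blocked` lists the unconfirmed assumption and `cleared` is empty. The check is trivial on purpose: the value comes from the assumption being written down where a teammate can see it.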

"The plan itself…is a quality gate," Loker said. "If we can make that really good... the downstream effect is very pronounced. You end up with a lot better code at the end of it."

CodeRabbit Plan doesn't run on a single AI model. It runs on three: Claude Opus, Sonnet, and Haiku. Each model is matched, in Loker's words, to the complexity of the task at hand.

Opus sits at the center. Loker described it as "the main brain and the orchestration loop," responsible for the highest-level reasoning in the system. "It's making the higher level, very strategic decisions," he said, "doing some understanding of what do I need to understand, what don't I know about the problem, and how can I sort of set a strategy up in order to discover that information systematically."

Sonnet operates at what Loker described as "a slightly lower level with maybe slightly more targeted tasks," meaning structured work that is well-defined but still substantive.

Haiku handles the most granular layer, the lower-complexity tasks and context distillation. Loker gave a concrete example of what that looks like in practice: "Here's a big file. I need this function out of it. I need to understand what it does, but I don't really need the code." By using Haiku for that kind of extraction, the system avoids burning expensive Opus or Sonnet tokens on work that doesn't require them. "The token cost is cheaper with Haiku, it's faster," Loker said. "And so doing that, and that's going to happen a lot, you're saving a lot of cost and time."

Dixon's framing for the Haiku tier: "I treat it as Sonnet-light for most use cases." He added that Haiku starts to show strain under pressure. "Once you've traversed many files or you are halfway through a very complex plan, that is where I think you start to see the degradation of a Haiku-class model versus Sonnet," he said.

The goal of the entire tiering, Loker said, is straightforward: "Just use the brain of the operation in the spot where it's needed and not everywhere."
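The tiering described above can be sketched as a simple routing table. This is an assumption-laden illustration, not CodeRabbit's routing logic: the task kinds (`"strategy"`, `"targeted"`, `"distill"`), the `Task` type, and the fallback choice are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    OPUS = "opus"      # strategy and orchestration
    SONNET = "sonnet"  # targeted, well-defined but substantive work
    HAIKU = "haiku"    # extraction and context distillation


@dataclass
class Task:
    description: str
    kind: str  # hypothetical labels: "strategy", "targeted", "distill"


# Hypothetical mapping from task kind to model tier.
ROUTES = {
    "strategy": Tier.OPUS,
    "targeted": Tier.SONNET,
    "distill": Tier.HAIKU,
}


def pick_tier(task: Task) -> Tier:
    """Return the tier assumed adequate for the task kind."""
    # Unknown kinds fall back to the middle tier rather than the most expensive one.
    return ROUTES.get(task.kind, Tier.SONNET)


# Usage: summarizing one function out of a big file goes to the cheapest tier.
summary_task = Task("Explain what this function does, without the code", "distill")
print(pick_tier(summary_task))  # Tier.HAIKU
```

A static table is the simplest possible router. A real system would also need some way to classify tasks in the first place, which this sketch leaves out.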

Building the planner required building something CodeRabbit didn't have: an evaluation system for planning quality, not just code quality. And that, Loker acknowledged, was harder than expected.

"Initially it's hand-tuned," Loker said. "We have to do a lot of manual inspection. We have to slowly build up a good set of LLM judges that can evaluate certain aspects of the plan."

One surprise: finding the right level of detail in the plan itself. Too detailed, and the plan becomes stale the moment the code starts evolving. "Finding that tipping point was difficult," Loker said. "It required a lot of iterations."

Another insight came later: measuring the tokens consumed during the exploration phase and using that as a signal for whether the planning step was actually producing downstream efficiency. Because the system ultimately outputs code, you can evaluate the code and compare it to what comes out when you skip the planning step entirely.
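The comparison can be reduced to simple arithmetic. The function below is a hypothetical sketch of such a signal, and the numbers in the usage line are made up for illustration. They are not CodeRabbit measurements.

```python
def token_savings(with_plan: int, without_plan: int) -> float:
    """Fraction of tokens saved by the planned run relative to the unplanned one."""
    if without_plan <= 0:
        raise ValueError("without_plan must be positive")
    return (without_plan - with_plan) / without_plan


# Usage with made-up counts: a planned run using 750 tokens vs. 1000 unplanned.
print(token_savings(750, 1000))  # 0.25
```

A negative result would mean the planned run consumed more tokens than the unplanned one, which is itself a useful signal that the plan is too detailed or pointed the agent in the wrong direction.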

Dixon noted that this kind of evaluation discipline generalizes: "Teams building products on top of this type of technology can actually benefit a lot by thinking about what is the sort of right level of granularity for their domain."

There's a benefit to collaborative planning that goes beyond the immediate output, and Loker spent time on it during the webinar. When a team works through a plan together, surfacing assumptions, debating scope, and aligning on success criteria, they create a record of what was decided and why.

"If somebody new comes in and they want to understand how did we build this, why did we build it," Loker said, "there's now a record of that. It's not ephemeral."

That record serves as a validation tool too. When the code comes back, the team can check it against the original plan and not just against test results, but against intent. Did this do what we said we were going to do? Did it meet the success criteria we agreed on?

Dixon summed up a new reality, a new stage of sorts, of AI coding. "Plan quality is this new sort of distinct moment within generally LLM-driven knowledge work."

It's not a new idea. Engineers have always known that understanding what you're building, really understanding it, not just gesturing at it, is the difference between a project that ships and one that gets reworked three times before anyone admits it's wrong.

What's new is the economics. AI has compressed the time between intention and execution so dramatically that the cost of misalignment has gone asymmetric. You can now build in hours what used to take weeks. Which means you can now be wrong, at scale, faster than ever before.

The login page story is funny in retrospect. Hours of tokens, a sophisticated application, no way to log in. But it's also a parable for an industry that is still learning that the bottleneck has moved. Writing code used to be the bottleneck. AI moved it. The bottleneck now is knowing what to write, and making sure everyone agrees before the first line is generated.

Loker's father had it right all along. Measure twice, cut once. Less haste, more speed.

It just took an AI coding agent and a missing login page to make the lesson stick.

CodeRabbit Plan is available now.