Aleks Volochnev

December 04, 2025

"Debugging is twice as hard as writing the code in the first place. Therefore, if you write the code as cleverly as possible, you are, by definition, not smart enough to debug it."

I've been programming since I was ten. When it became a career, I got obsessed with code quality: clean code, design patterns, all that good stuff. My pull requests were polished like nobody's business: well-thought-out logic, proper error handling, comments, tests, documentation. Everything that makes reviewers nod approvingly.

Then, LLMs came along and changed everything. I don't write that much code anymore since AI does it faster. A developer's work now mainly consists of two parts: explaining to a model what you need, then verifying what it wrote, right? I've become more of a code architect and quality inspector rolled into one.

And that brought back a problem I knew all too well from my years as a tech lead: reading code is harder than writing it.

As an open-source maintainer and senior developer, I had to review tons of other people's code, and I learned the hard way what Brian Kernighan meant in the quote above. Reading unfamiliar code is exhausting. You have to reverse-engineer someone else's thought process, figure out why they made certain decisions, and consider edge cases they might have missed.

With my own code, reviewing and adjusting were a no-brainer. I designed it, I wrote it, and the whole mental model was still fresh in my head. Now the code is coming from an LLM, and suddenly reviewing "my own code" has become reviewing someone else's code. Except this "someone else" writes faster than I can think and doesn't take lunch breaks.

AI is supposed to help, but if I want to ship production-grade software now, I actually have more hard work to do than before. The irony!

And that’s why, for my first blog post since joining CodeRabbit, I wanted to focus on that fact. This is also, incidentally, why I decided to join CodeRabbit. But we’ll get to that part later.

Here's where things get uncomfortable: we're human beings, not code-reviewing machines. And human brains don't want to do the hard work of thoroughly reviewing something that a) already runs fine, b) passes all the tests, and c) someone else will review anyway. It's so much easier to just git commit && git push and go grab that well-deserved coffee. Job done!

I went from “writing manually and shipping quality code” to “generating code fast but shipping… bad code!” The quality didn't drop because I had less time. I actually had MORE time, since I wasn't typing everything myself. I just tended to “shorten” the verification phase, telling myself "it works, the tests pass, the team will catch anything major."

At this point, I was already using CodeRabbit to review my team's pull requests (as an OSS-focused dev, I was an early adopter), and those reviews were genuinely helpful! CodeRabbit would catch things that slipped through: security issues, edge cases, some logic bugs. The kind of problems that are easy to miss when you're moving fast.

But here's the thing: those reviews were coming too late. The code was already pushed. Already in the repository, visible to the entire team. Sure, CodeRabbit would flag the issues and I'd fix them, but not before my teammates had seen my AI-generated code with obvious problems that I hadn't bothered to review properly.

That's not a great look when you've spent decades building a reputation for quality.

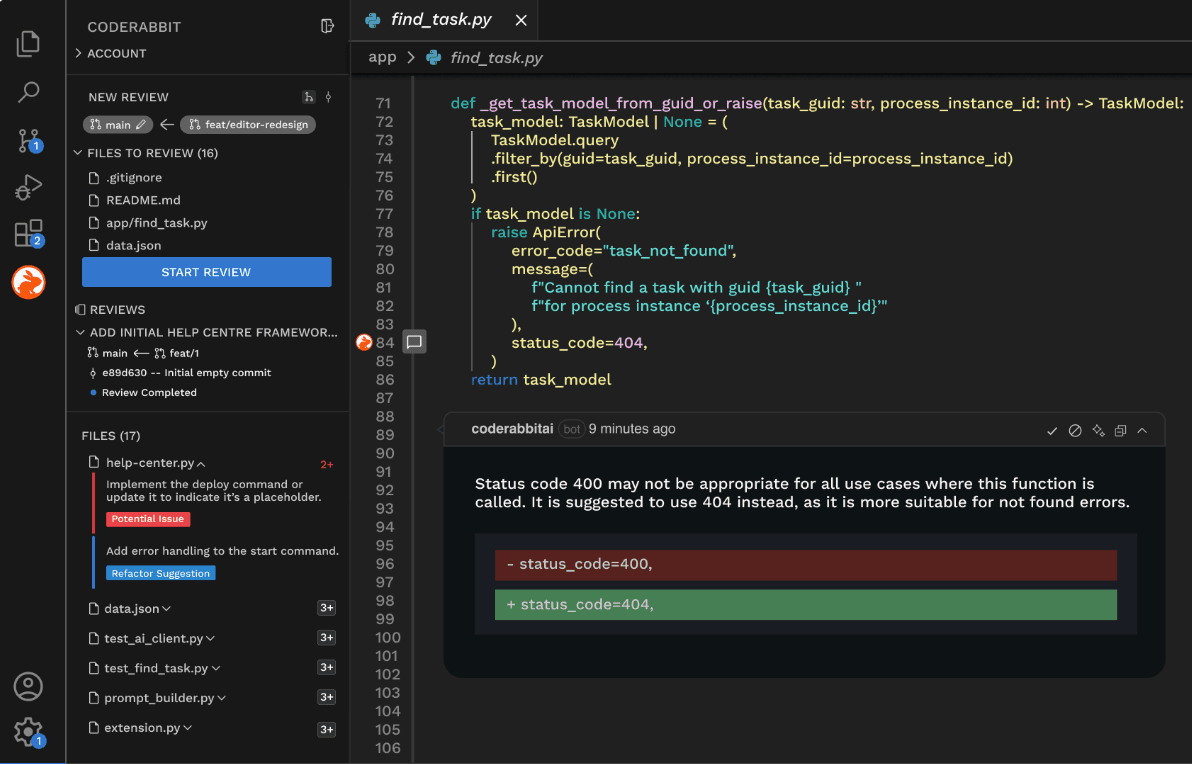

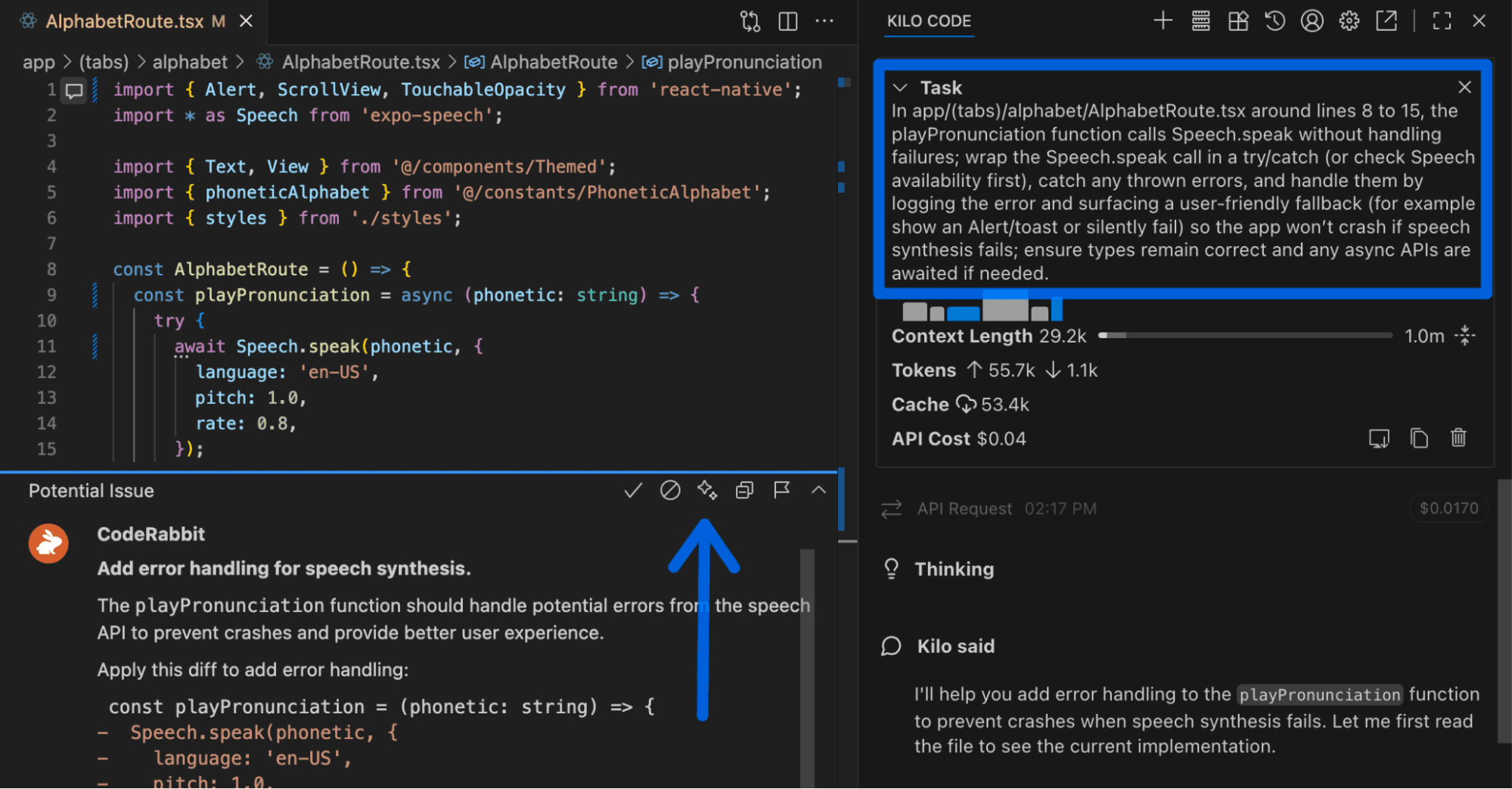

Then, I discovered CodeRabbit had an IDE extension. The AI code reviewer I was already using for PRs could also review my code locally, before anything hits the repo. This was exactly what I needed.

When I ask CodeRabbit for a review, or simply stage my changes, it reviews them right in VS Code, catching issues before git push. Now, my team sees only the polished version, just like the old days. Except now, I'm shipping AI-generated code at AI speeds. And I’m doing it with actual quality control. Automatic reviews mean no willpower required: I don't have to remember to run it, I don't have to open a separate tool. It just happens at commit time. Reviewing doesn't feel like plowing in the rain anymore.

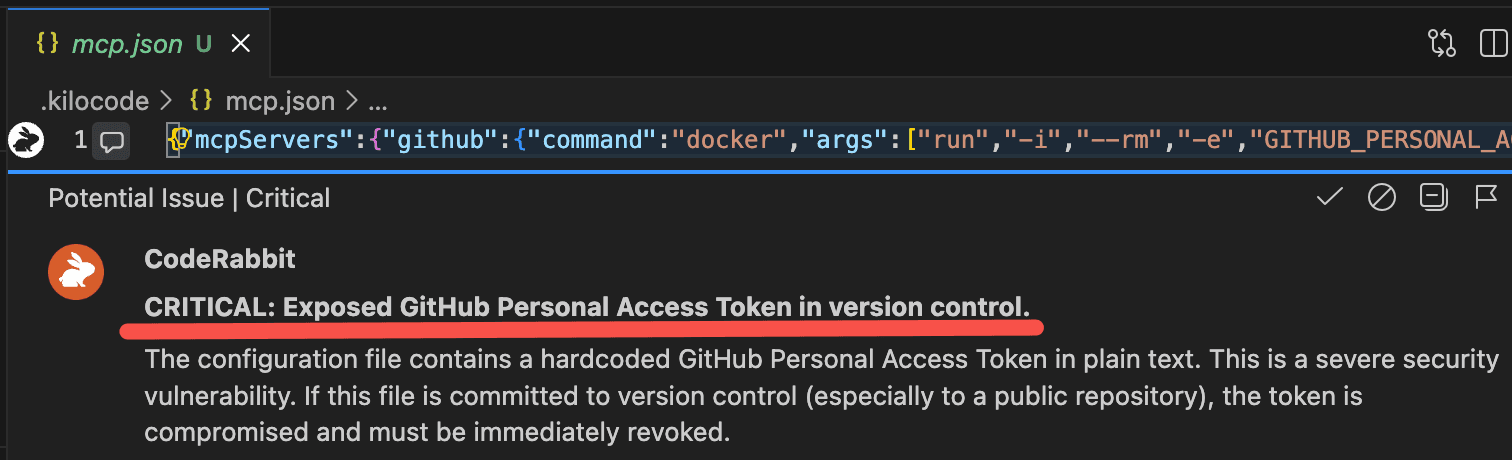

This gets critical when you're looking at potential security headaches, like the one in the screenshot. CodeRabbit caught an access token leak that could've been a total disaster! Issues like this need to be addressed before the code gets pushed to a repository.
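To make "catch it before the push" concrete, here is a minimal Python sketch of the general idea: scan the added lines of a diff (for example, the output of git diff --cached) for strings that look like credentials. This is not how CodeRabbit works internally. The function name find_leaks and both patterns are hypothetical, chosen only for illustration, and a real scanner would use a much larger rule set.

```python
import re

# Hypothetical patterns for illustration only; real tools ship far more rules.
SECRET_PATTERNS = [
    # Assumed shape of a GitHub classic personal access token.
    re.compile(r"ghp_[A-Za-z0-9]{36}"),
    # A quoted value of 16+ characters assigned to a suspicious-looking name.
    re.compile(r"(?i)(token|secret|api_key)\s*[:=]\s*['\"][^'\"]{16,}['\"]"),
]


def find_leaks(diff_text: str) -> list[tuple[int, str]]:
    """Return (diff line number, content) for added lines that look like secrets."""
    hits = []
    for number, line in enumerate(diff_text.splitlines(), start=1):
        # Only added lines matter; skip the "+++ b/file" header line.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        if any(pattern.search(line) for pattern in SECRET_PATTERNS):
            hits.append((number, line[1:].strip()))
    return hits


# A made-up diff with an obviously fake token value.
sample_diff = (
    "+++ b/app.py\n"
    '+ACCESS_TOKEN = "abcdefghijklmnop1234"\n'
    "-timeout = 10\n"
    "+timeout = 30\n"
)

for line_number, content in find_leaks(sample_diff):
    print(f"possible secret on diff line {line_number}: {content}")
```

A check like this only flags literal patterns. It says nothing about logic bugs or missed edge cases, which is where a full review earns its keep.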

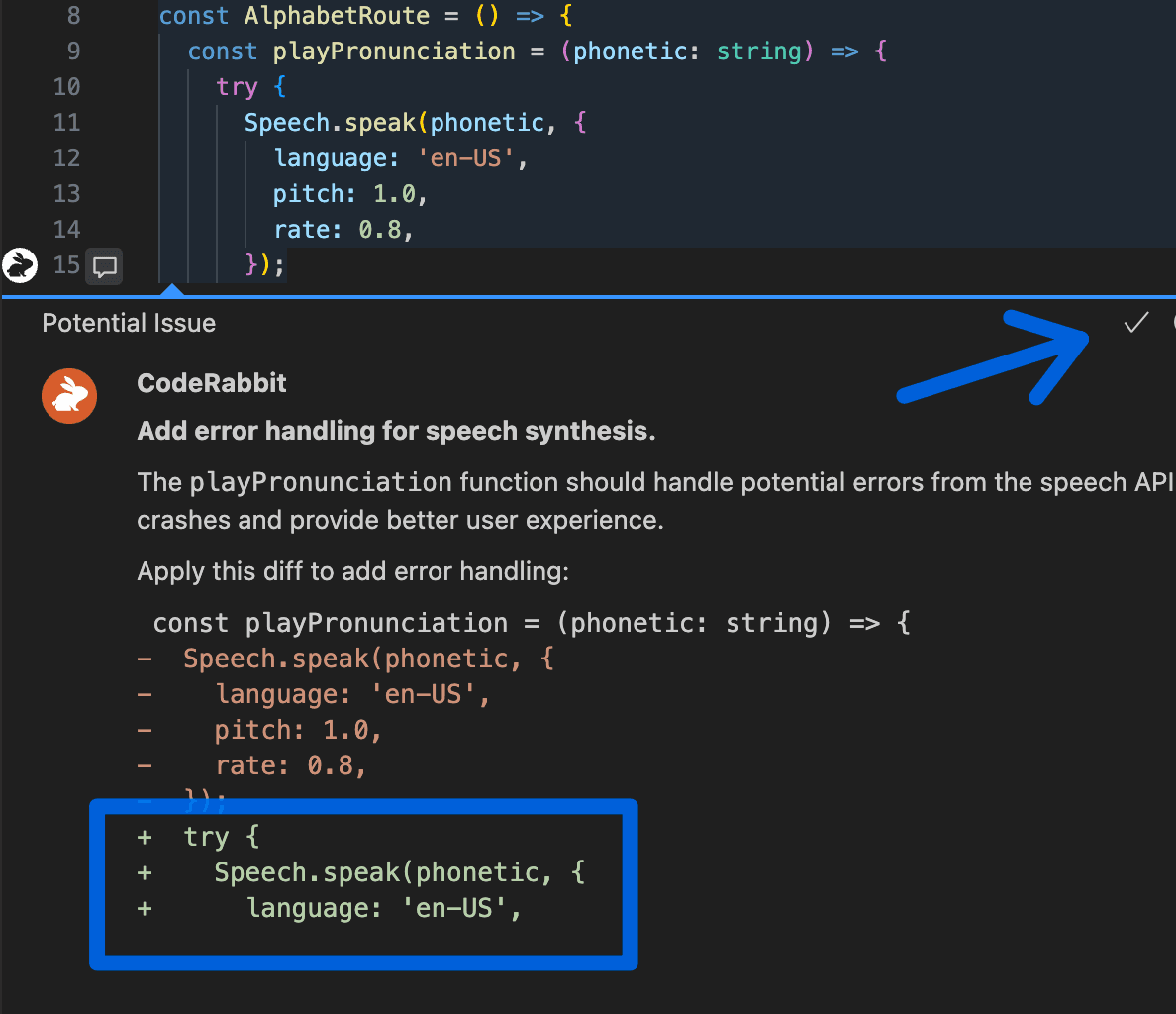

More than that, when it finds something, the fixes are committable. The tool doesn’t tell me to "go figure it out" but gives actual suggestions I can apply immediately, in one click.

For more advanced cases that can’t be resolved with a simple fix, the CodeRabbit IDE extension writes a prompt that it sends to an AI agent of your choice. Fun fact: CodeRabbit is so good at writing prompts that I've learned a lot from them and improved my own prompt engineering skills!

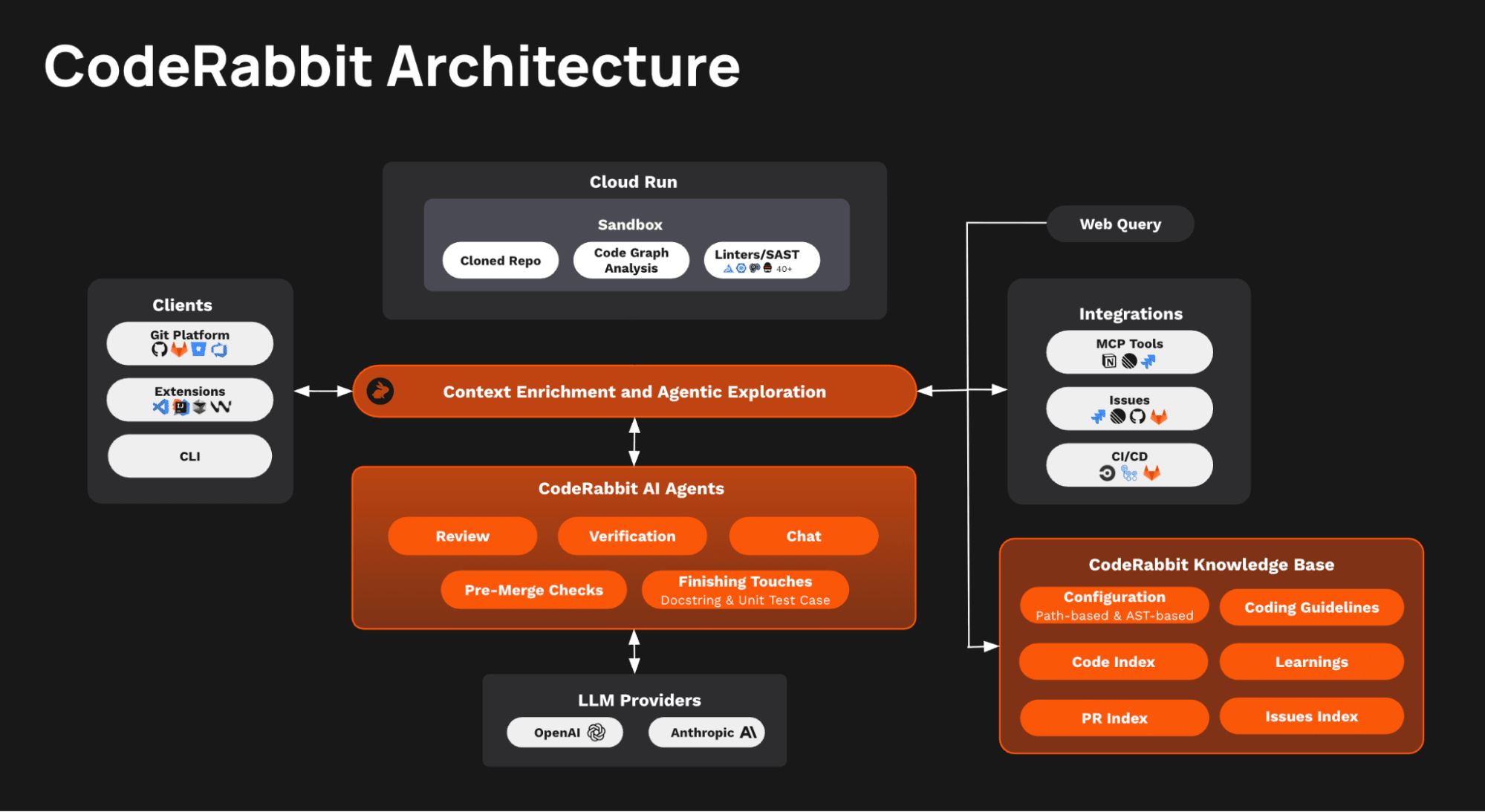

Even the free CodeRabbit IDE Review plan offers incredibly helpful feedback and catches numerous issues. However, the Pro plan unlocks its true power, providing the same comprehensive coverage you expect from regular CodeRabbit Pull Request reviews: tool runs, Code Graph analysis, and much more. There is a huge infrastructure behind every check!

Brian Kernighan was right: reading code is harder than writing it. That was true in 1974 and it's even more true now when AI can generate 300 lines while you're still thinking about a variable name.

We thought AI would make our jobs easier. And it does… if you only count the writing. But the reading (verifying, reviewing, and understanding what the AI agent actually built)? That got harder.

Many of us are doing 10x the volume at 10x the speed, which means 10x more code to read with the same human brain that gets lazy and wants coffee breaks. The solution isn't to slow down or go back to typing everything manually. The solution is to automate the code review process as thoroughly as we automated the code writing process. If your AI writes the code, another AI should be reading it before you get to it.

The quality of the reviews is why I recently transitioned from being a CodeRabbit user to joining the team. And that’s why you should also try CodeRabbit in your IDE. The free tier means there's basically no excuse not to try it. Your reputation will thank you.

Get started today with a 14-day free trial!